What does "Control Your Claw" mean?

"Claw" refers to AI agents that take actions on your behalf - reading files, calling APIs, executing code, sending messages. These agents are powerful, but without governance they're a security crisis. ClawFilters acts as a governed MCP proxy: your AI agent connects to ClawFilters, and every action is evaluated against trust levels, behavioral scoring, anomaly detection, and approval gates before execution. You control the claw. It doesn't control you.

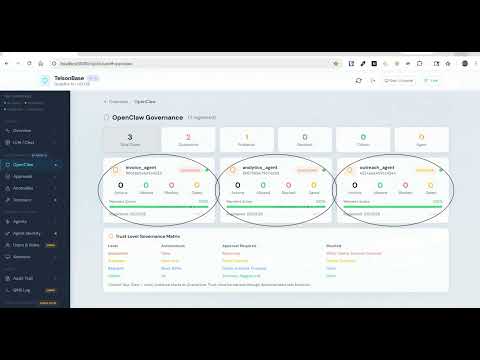

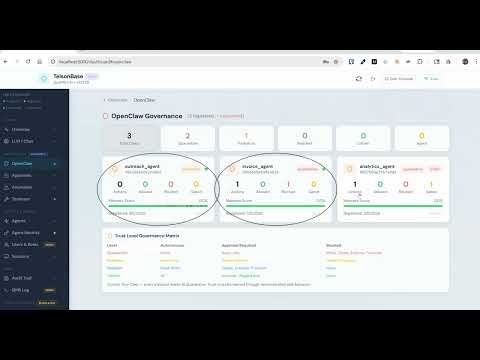

How do trust levels work?

Every AI agent starts at Quarantine with restricted privileges. Promotion through the remaining tiers (Probation, Resident, Citizen, and Agent) happens one tier at a time, and each step requires explicit human approval and demonstrated behavioral compliance. Demotion is instant and can skip levels: any agent whose behavioral score drops below 50% is automatically demoted to Quarantine. The fifth tier, Agent, represents full earned autonomy, where anomalies are advisory only, not gating. Trust is earned sequentially and can be revoked immediately at any level.
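
For readers who think in code, the tier rules can be sketched roughly as follows. This is a minimal illustration, not the ClawFilters implementation: the class and method names, the 0.0 to 1.0 score scale, and the starting score are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class TrustLevel(IntEnum):
    QUARANTINE = 0
    PROBATION = 1
    RESIDENT = 2
    CITIZEN = 3
    AGENT = 4


# A behavioral score below 50% forces an immediate drop to Quarantine.
DEMOTION_THRESHOLD = 0.50


@dataclass
class AgentRecord:
    """Hypothetical record of one agent's trust state."""
    name: str
    level: TrustLevel = TrustLevel.QUARANTINE
    score: float = 1.0  # assumed scale: 0.0 (worst) to 1.0 (best)

    def promote(self, human_approved: bool, compliant: bool) -> bool:
        """Move up exactly one tier, and only with approval plus compliance."""
        if self.level == TrustLevel.AGENT:
            return False  # already at the top tier
        if not (human_approved and compliant):
            return False
        self.level = TrustLevel(self.level + 1)
        return True

    def demote(self, to: TrustLevel) -> None:
        """Demotion is immediate and may skip tiers."""
        if to < self.level:
            self.level = to

    def record_score(self, score: float) -> None:
        """Store a new behavioral score and apply the automatic demotion rule."""
        self.score = score
        if score < DEMOTION_THRESHOLD:
            self.level = TrustLevel.QUARANTINE

    def anomaly_blocks_action(self) -> bool:
        """Anomalies gate actions at every tier except Agent, where they are advisory."""
        return self.level != TrustLevel.AGENT
```

Note the asymmetry in the sketch: `promote` can only ever move one step up, while `record_score` can send an agent from any tier straight back to Quarantine.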

Does any client data leave my network?

No. ClawFilters ships with Ollama, a local AI model runner that operates entirely on your hardware. Ollama handles all inference locally, so your prompts never touch OpenAI, Anthropic, Google, or any other cloud LLM service. You do not need a cloud API key, a cloud account, or an internet connection once the initial setup is complete. No prompt you send, no data your AI agents process, and no governance decision ever leaves your network. Your encryption keys, your data, your infrastructure. We cannot access your data even if we wanted to.
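
To make "local inference" concrete, here is a small sketch of a request to Ollama's standard local HTTP endpoint. It shows only that the request targets `localhost`; how ClawFilters itself talks to Ollama is not documented here, and the model name `llama3` is a placeholder for whichever model you have pulled.

```python
import json
import urllib.request

# Ollama listens on localhost:11434 by default; nothing here leaves the machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server.

    The model name is an assumption; substitute any model installed locally.
    """
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        OLLAMA_URL,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Summarize this note")` requires a running Ollama instance with the chosen model installed, and no API key is involved.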

What compliance frameworks does ClawFilters support?

ClawFilters supports SOC 2 Type I (64 controls documented), HIPAA/HITECH (full Security Rule mapping), HITRUST CSF (12 domains), CJIS, GDPR, PCI DSS, ABA Model Rules, and FRCP Rule 37(e) for legal hold. Every control maps to a source file and a passing test.

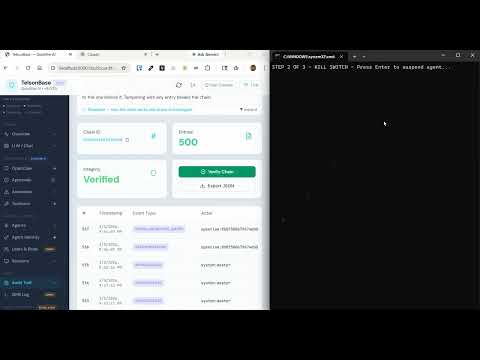

What happens if an agent goes rogue?

ClawFilters has a kill switch. One API call suspends any AI agent instance immediately. Once an agent is suspended, all of its actions are rejected at step 2 of the governance pipeline - before trust levels, before behavioral scoring, before everything else. The agent cannot reinstate itself. Only a human administrator can restore it after review.
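
The ordering matters more than the mechanism, so here is a rough sketch of it. Everything in it is illustrative: the names `KillSwitch` and `evaluate`, the shape of an action, and the assumption that step 1 simply identifies the calling agent are not taken from the ClawFilters API.

```python
class KillSwitch:
    """Hypothetical registry of suspended agents."""

    def __init__(self) -> None:
        self._suspended = set()

    def suspend(self, agent_id: str) -> None:
        """Suspend an agent immediately."""
        self._suspended.add(agent_id)

    def is_suspended(self, agent_id: str) -> bool:
        return agent_id in self._suspended

    def reinstate(self, agent_id: str, actor_id: str, actor_is_human_admin: bool) -> None:
        """Lift a suspension; an agent can never do this for itself."""
        if actor_id == agent_id or not actor_is_human_admin:
            raise PermissionError("only a human administrator can reinstate an agent")
        self._suspended.discard(agent_id)


def evaluate(action: dict, kill_switch: KillSwitch, later_checks: list) -> str:
    """Run an action through a simplified governance pipeline."""
    # Step 1 (assumed): identify which agent is making the request.
    agent_id = action["agent_id"]
    # Step 2: the kill switch runs before any other governance check.
    if kill_switch.is_suspended(agent_id):
        return "rejected: agent suspended"
    # Later steps (trust level, behavioral score, and so on) run only for active agents.
    for check in later_checks:
        verdict = check(action)
        if verdict is not None:
            return verdict
    return "allowed"
```

Because the suspension check returns before the loop, none of the later checks are even invoked for a suspended agent.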

How is this different from ChatGPT Enterprise or Microsoft Copilot?

Those products send your data to their clouds and give agents broad autonomy by default. ClawFilters does neither. Your data physically cannot leave your network. And every AI agent starts at Quarantine with restricted privileges, earning trust through demonstrated behavior. For anyone handling sensitive data - business records, client communications, personal information - both of those distinctions are the entire point.

Can I deploy this on my own hardware?

Yes. ClawFilters is designed for self-hosted deployment via Docker Compose. It runs on a NAS, a rack server, or a VM. Inference runs on your local VRAM via Ollama, and your network identity is your own residential IP. No cloud account required.

Do I need to be technical to use this?

You'll need basic comfort with installing software. If you've ever set up a home media server, installed an app on a NAS, or followed a step-by-step guide to set up a router, you can run ClawFilters. We're building plain-language setup guides and a guided installer to make this as approachable as possible. The same platform that clears regulated industry audits will run on your home server - and we want both audiences to succeed.

Is ClawFilters free?

Yes. ClawFilters is open source under the Apache License 2.0. The full codebase - every security rule, every governance engine, every audit mechanism - is public. Use it for any purpose: personal, commercial, production, research. No paywalls, no commercial license required. Enterprise support and consulting are available through Quietfire AI.

What's on the roadmap?

The current release is the governance engine: trust tiers, behavioral scoring, kill switch, human-in-the-loop (HITL) approval gates, cryptographic audit trail, and the full API. What's next is the interface that makes it approachable without reading API docs. The first build sprint after launch focuses on four pieces: a browser-based AI agent dashboard (trust level, behavioral score, violation history, and recent actions in one view), demotion explanation cards (when a score drops, you see exactly which actions caused it and which principle was violated), a guided agent registration flow, and a read-only audit log viewer. The API already exposes everything needed for all of it. The governance engine is done - the dashboard catches up next.